SRP100604 and SRP268884 have been uploaded and fastq files created.

SRA links:

https://www.ncbi.nlm.nih.gov/sra?term=SRP100604

https://www.ncbi.nlm.nih.gov/sra?term=SRP268884

Code Example

prefetch.slurm

#! /bin/bash

#SBATCH --job-name=prefetch_SRR

#SBATCH --partition=Orion

#SBATCH --nodes=1

#SBATCH --ntasks-per-node=1

#SBATCH --mem=4gb

#SBATCH --output=%x_%j.out

#SBATCH --time=24:00:00

cd /nobackup/tomato_genome/alt_splicing/SRP100604

module load sra-tools/2.11.0

# vdb-config --interactive needs a terminal, so run it once on a login node before submitting this job.

files=(

SRR5279858

SRR5279875

SRR5279883

SRR5280323

SRR5280370

SRR5280382

SRR5280383

SRR5280392

SRR5282476

SRR5282478

SRR5282480

SRR5282481

)

for f in "${files[@]}"; do echo "$f"; prefetch "$f"; done
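
Before submitting the fasterq-dump array job, it may help to confirm that every accession was actually fetched. The following is a minimal sketch, assuming prefetch wrote each run to `<accession>/<accession>.sra` under the working directory, which is the layout fasterdump.slurm relies on. The helper name `missing_sra` is hypothetical and not part of sra-tools.

```bash
# Hypothetical helper: print every accession whose .sra file is absent or empty.
# Assumes the <dir>/<accession>/<accession>.sra layout used in this ticket.
missing_sra() {
    local dir=$1; shift
    local acc
    for acc in "$@"; do
        # -s is true only when the file exists and is larger than zero bytes
        [ -s "$dir/$acc/$acc.sra" ] || echo "$acc"
    done
}
```

Calling `missing_sra /nobackup/tomato_genome/alt_splicing/SRP100604 "${files[@]}"` at the end of prefetch.slurm would list any accessions that need to be fetched again; no output means every run is present.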

fasterdump.slurm

#! /bin/bash

#SBATCH --job-name=fastqdump_SRR

#SBATCH --partition=Orion

#SBATCH --nodes=1

#SBATCH --ntasks-per-node=1

#SBATCH --mem=40gb

#SBATCH --output=%x_%j.out

#SBATCH --time=24:00:00

#SBATCH --array=1-12

# Look up this task's accession: line number SLURM_ARRAY_TASK_ID of Sra_ids.txt

file=$(sed -n -e "${SLURM_ARRAY_TASK_ID}p" /nobackup/tomato_genome/alt_splicing/SRP100604/Sra_ids.txt)

cd /nobackup/tomato_genome/alt_splicing/SRP100604

module load sra-tools/2.11.0

echo "Starting fasterq-dump on $file"

cd /nobackup/tomato_genome/alt_splicing/SRP100604/$file

fasterq-dump ${file}.sra

perl /projects/tomato_genome/scripts/validateHiseqPairs.pl ${file}_1.fastq ${file}_2.fastq

cp ${file}_1.fastq /nobackup/tomato_genome/alt_splicing/SRP100604/${file}_1.fastq

cp ${file}_2.fastq /nobackup/tomato_genome/alt_splicing/SRP100604/${file}_2.fastq

echo "finished"
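
The array job maps each SLURM_ARRAY_TASK_ID to one line of Sra_ids.txt, so that file needs exactly one accession per line and the --array range has to match its line count. A small sketch of that lookup follows; the helper names `write_ids` and `id_for_task` are hypothetical, and only `sed -n "Np"` comes from the script itself.

```bash
# Hypothetical helper: write one accession per line to the ID file.
write_ids() {
    local out=$1; shift
    printf '%s\n' "$@" > "$out"
}

# Hypothetical helper: print the accession on line N of the ID file,
# the same lookup fasterdump.slurm does with SLURM_ARRAY_TASK_ID.
id_for_task() {
    local ids=$1 task=$2
    sed -n "${task}p" "$ids"
}
```

For example, `write_ids Sra_ids.txt "${files[@]}"` followed by `id_for_task Sra_ids.txt 1` prints the first accession in the list. Deriving the range from the file, for instance `sbatch --array=1-"$(wc -l < Sra_ids.txt)" fasterdump.slurm`, is one way to keep the two in step when the list changes.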

Comments on results

Directory: /nobackup/tomato_genome/alt_splicing/SRP100604

SRP100604: Some SRR runs were single stranded rather than double stranded, so fasterq-dump could not produce _1.fastq and _2.fastq files for them.

SRR runs affected:

SRR5282476

SRR5282478

SRR5282480

SRR5282481
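
One way to tell these runs apart automatically is to look at which files fasterq-dump left behind. This is a sketch under the assumption that fasterq-dump ran with its default split-3 behaviour, which writes `<accession>_1.fastq` and `<accession>_2.fastq` for runs with two reads per spot and a single `<accession>.fastq` otherwise. The helper name `run_layout` is hypothetical.

```bash
# Hypothetical helper: report the layout of one run from its fastq outputs.
# Assumes default fasterq-dump naming: <acc>_1.fastq and <acc>_2.fastq for
# two-read runs, <acc>.fastq for one-read runs.
run_layout() {
    local dir=$1 acc=$2
    if [ -s "$dir/${acc}_1.fastq" ] && [ -s "$dir/${acc}_2.fastq" ]; then
        echo paired
    elif [ -s "$dir/${acc}.fastq" ]; then
        echo single
    else
        echo missing
    fi
}
```

In fasterdump.slurm, the validateHiseqPairs.pl check and the two cp commands could then run only when `run_layout "$PWD" "$file"` prints `paired`, with a single copy of `${file}.fastq` in the `single` case.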

Directory: /nobackup/tomato_genome/alt_splicing/SRP268884

SRP268884: All runs produced double stranded _1.fastq and _2.fastq files.

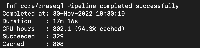

Next Step: Run the nf-core/rnaseq Nextflow pipeline on SRP268884.

Question: Should we still use all of SRP100604 even though it contains single stranded SRR files, or use only the double stranded files it contains?

[~aloraine]

aloraine's answer to the above query: Go ahead and use all the available data in SRP100604. I believe that nextflow is able to handle this complication intelligently. I think you can omit the "second" file name in the "samples" file for single end runs. (Please note that "single strand" is not the same thing as "single end" - make sure that we are talking about the same thing before proceeding.)
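
The suggestion to omit the second file name can be sketched as a small samplesheet generator. Everything here is an assumption to check against the pipeline version in use: the column names `sample,fastq_1,fastq_2,strandedness` follow the nf-core/rnaseq samplesheet format, the pipeline may require gzip-compressed files (`.fastq.gz`), and the helper name `make_samplesheet` is hypothetical. Note that the read layout (one file or two) and the `strandedness` column are separate fields, which matches the single-end versus single-strand distinction above.

```bash
# Hypothetical helper: print one samplesheet row per accession.
# Arguments: directory, strandedness value, fastq suffix, then accessions.
# Column names are an assumption based on the nf-core/rnaseq samplesheet format.
make_samplesheet() {
    local dir=$1 strand=$2 ext=$3; shift 3
    local acc
    echo "sample,fastq_1,fastq_2,strandedness"
    for acc in "$@"; do
        if [ -e "$dir/${acc}_1$ext" ] && [ -e "$dir/${acc}_2$ext" ]; then
            # Paired-end run: both mate files are listed
            echo "$acc,$dir/${acc}_1$ext,$dir/${acc}_2$ext,$strand"
        elif [ -e "$dir/${acc}$ext" ]; then
            # Single-end run: the second file name is left empty
            echo "$acc,$dir/${acc}$ext,,$strand"
        else
            echo "warning: no fastq found for $acc" >&2
        fi
    done
}
```

A call such as `make_samplesheet /nobackup/tomato_genome/alt_splicing/SRP100604 unstranded .fastq.gz SRR5279858 SRR5282476 > samples.csv` would write one row per run, with an empty `fastq_2` field for any run that has only one file. The strandedness value passed in is a placeholder and has to reflect how the libraries were actually prepared.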

Task

Major
